Luminance and radiance

"Brightness" is much more complicated than I thought

I'm currently speedrunning analog electronics for the Late Mate project¹, and one thing I had to figure out is how much current the photodiode I chose can generate. It turned out to be a small rabbit hole inside the larger rabbit hole.

When used in a sensor², the photodiode acts like a tiny solar cell, generating current³. This current is picked up by a transimpedance amplifier and converted into a voltage our microcontroller can read with an ADC. The more light hits the sensor, the higher the voltage at the microcontroller, and the higher the number the firmware reads.

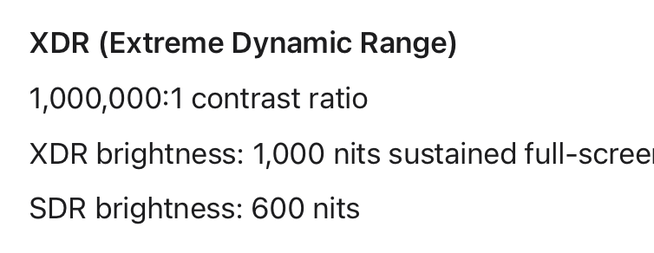
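The chain from photocurrent to firmware number can be sketched in a few lines. This is an idealized model, not the actual Late Mate design: the 3.3 V reference, the 12-bit ADC, the 40 µA maximum current, and all function names are placeholder assumptions, and the amplifier is treated as a perfect `V = I × R` converter.

```python
def tia_output_voltage(photocurrent_a: float, feedback_ohms: float) -> float:
    """Ideal transimpedance amplifier: output voltage magnitude is I * R_f."""
    return photocurrent_a * feedback_ohms


def adc_reading(voltage_v: float, vref_v: float = 3.3, bits: int = 12) -> int:
    """Ideal ADC: map 0..vref onto 0..(2**bits - 1), clamping out-of-range input."""
    full_scale = (1 << bits) - 1
    clamped = min(max(voltage_v, 0.0), vref_v)
    return round(clamped / vref_v * full_scale)


def feedback_resistor_for(max_photocurrent_a: float, vref_v: float = 3.3) -> float:
    """Largest R_f that keeps the brightest expected signal inside the ADC range."""
    return vref_v / max_photocurrent_a


if __name__ == "__main__":
    i_max = 40e-6  # hypothetical maximum photocurrent in amps (assumption)
    r_f = feedback_resistor_for(i_max)
    print(f"feedback resistor: {r_f:.0f} ohm")
    for fraction in (0.0, 0.25, 0.5, 1.0):
        volts = tia_output_voltage(fraction * i_max, r_f)
        print(f"{fraction:4.0%} light -> {volts:.2f} V -> ADC {adc_reading(volts)}")
```

The point of the sketch is that the feedback resistor is set by the largest current the photodiode will ever produce, which is why that number matters so much.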

To design the amplifier, I need to calculate the maximum current it will receive from the photodiode. The current depends on the photodiode and the amount of light hitting it. How bright is a computer monitor, really? Should be easy to find! It's right there!

Except computer monitors are made for humans. This means their brightness is always quoted in "nits", also known as "candelas per square metre". Which are units of "luminance". What is "luminance", I asked myself.

Next thing I knew, I had stumbled upon a very helpful presentation explaining that luminance is "how bright something looks to humans", while "radiance" is "how much energy is actually radiated". A bright ultraviolet source can be very radiant, but not at all luminous!

This means converting between the two is… hard? I need the energy part, but I would need to know what wavelengths computer monitors emit to calculate the energy from visible brightness. Thankfully, a bunch of smart people have worked out how much visible brightness sunlight delivers per watt of radiated energy. Screens don't really match the sunlight spectrum, but it's close enough for a rough guess.
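Here is a minimal sketch of how such a guess can be put together. Every number in it is an assumption rather than a value from the project: roughly 93 lm/W is a commonly quoted ballpark for sunlight's luminous efficacy, the screen is treated as a Lambertian emitter with the sensor pressed flat against it (so irradiance is π times radiance), and the 400-nit screen, the photodiode area, and the responsivity are made-up placeholders.

```python
import math

# Assumed luminous efficacy of a sunlight-like spectrum, in lumens per watt.
SUNLIGHT_EFFICACY_LM_PER_W = 93.0


def radiance_from_luminance(luminance_nits: float,
                            efficacy_lm_per_w: float = SUNLIGHT_EFFICACY_LM_PER_W) -> float:
    """Convert luminance (cd/m^2) to radiance (W/(m^2*sr)) for an assumed spectrum."""
    return luminance_nits / efficacy_lm_per_w


def irradiance_at_screen(radiance: float) -> float:
    """Irradiance (W/m^2) on a sensor flush against a Lambertian screen: E = pi * L."""
    return math.pi * radiance


def photocurrent(luminance_nits: float,
                 active_area_m2: float,
                 responsivity_a_per_w: float,
                 efficacy_lm_per_w: float = SUNLIGHT_EFFICACY_LM_PER_W) -> float:
    """Rough photocurrent in amps: optical power on the diode times responsivity."""
    radiance = radiance_from_luminance(luminance_nits, efficacy_lm_per_w)
    optical_power_w = irradiance_at_screen(radiance) * active_area_m2
    return optical_power_w * responsivity_a_per_w


if __name__ == "__main__":
    # Hypothetical inputs: a 400-nit screen, a 7 mm^2 photodiode, 0.4 A/W responsivity.
    amps = photocurrent(400.0, 7e-6, 0.4)
    print(f"estimated photocurrent: {amps * 1e6:.1f} uA")
```

With these placeholder inputs the estimate lands in the tens of microamps. Treat it as an order-of-magnitude guess only: a real screen spectrum, viewing-angle falloff, and the diode's wavelength-dependent responsivity will all move the number.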

Huge success!

I hear you asking: Dan, couldn't you just measure? Yep, I could just measure. I will measure. But first we'll order our first batch of PCBs, and those will use the guesstimated number.

Next speedrun: KiCAD.

-

One problem we have is being fast ourselves. The response time we're targeting is 500 µs, corresponding to a 2 kHz refresh rate, a comfortable margin over the 540 Hz of the fastest monitor on the market. There are many digital sensors on the market that sense light and hand out a number over a digital protocol, but none that are fast, reasonably cheap, and in stock at our PCB vendor of choice all at once. Therefore I'm designing our own. ↩

-

In photovoltaic mode. We don't need that much bandwidth by TIA standards, so I believe it's fine to just leave the diode zero-biased. ↩

-

The mode is called "photovoltaic", yet it generates current! They should've called the mode "photoamperic". ↩